A few weeks ago I visited Amplidata together with my friend Howard Marks (@DeepStorageNet). Amplidata is one of the very few Belgian companies that are true storage vendors, and it is the only one that has done so with venture capital from ‘the Valley’ (i.e. Intel). If people from the Valley are willing to invest in a Belgian company, it might be worth a look. When Howard told me he'd be visiting Belgium for a project, I had no reason not to join 🙂

What is Object Storage:

I personally categorize storage into four main areas: Block / File / Object / BigData. I put BigData in its own category because it spans all of the others but has its own ways of dealing with the data (big data != a lot of data). The other three differ in the protocols they use. Block: SCSI protocol(s); File: NFS/SMB (1/2/3/CIFS)/…; Object: APIs (mostly over TCP/IP). Amplidata uses a REST API between the applications and the storage. Another aspect of object storage is that the file's metadata is stored together with the file, and together they form an “object”. Example: a picture has multiple attributes such as camera type, GPS location, timestamp, size and photographer. All this information can be stored with the file as metadata, so it can be queried intelligently afterwards without the need for a separate catalog or database.
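To make the "data plus metadata equals an object" idea concrete, here is a minimal in-memory sketch in Python. `ToyObjectStore`, its `put`/`get`/`query` methods and the metadata keys are all hypothetical; this is not Amplidata's REST API, and a real store would index the metadata instead of scanning every object as this toy does.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass
class StoredObject:
    """Hypothetical object: the payload and its metadata kept together."""
    key: str
    data: bytes
    metadata: dict[str, Any] = field(default_factory=dict)


class ToyObjectStore:
    """In-memory stand-in for an object store (illustration only)."""

    def __init__(self) -> None:
        self._objects: dict[str, StoredObject] = {}

    def put(self, key: str, data: bytes, **metadata: Any) -> None:
        # The metadata is stored inside the object, next to the data.
        self._objects[key] = StoredObject(key, data, dict(metadata))

    def get(self, key: str) -> StoredObject:
        return self._objects[key]

    def query(self, **criteria: Any) -> list[str]:
        # Filter on the objects' own metadata; no separate catalog is consulted.
        return sorted(
            obj.key
            for obj in self._objects.values()
            if all(obj.metadata.get(k) == v for k, v in criteria.items())
        )


store = ToyObjectStore()
store.put("img-001.jpg", b"...", camera="cam-A", photographer="Ann")
store.put("img-002.jpg", b"...", camera="cam-B", photographer="Ann")
store.put("img-003.jpg", b"...", camera="cam-A", photographer="Bob")
print(store.query(camera="cam-A"))  # ['img-001.jpg', 'img-003.jpg']
```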

Object storage is mostly used in larger environments and has a distributed architecture for protecting the data: it holds multiple copies and handles long-term archiving of bigger files. One of the industry leaders in object storage is Caringo with its CAStor product (also with Belgian roots, by the way). CAStor has its own distribution channel but is also available as an OEM product through A-brand vendors (e.g. the Dell DX6000). Technically, CAStor uses an N-copies protection model: a file is stored on one disk and then copied to other locations, such as other disks, storage nodes or sites. Example: 4 copies of 1 object, 2 on different nodes in site A and 2 on different nodes in site B.
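The 4-copy, 2-site example above can be sketched as a simple placement rule: pick distinct nodes inside every site and put one full copy on each. The function names and node names below are hypothetical, and this is not CAStor's actual placement logic; it only shows the shape of an N-copies policy and why its raw capacity cost is N times the object size.

```python
def place_copies(sites: dict[str, list[str]], copies_per_site: int) -> list[tuple[str, str]]:
    """Return (site, node) targets: one full copy per node, distinct nodes within each site."""
    placement: list[tuple[str, str]] = []
    for site, nodes in sites.items():
        unique_nodes = list(dict.fromkeys(nodes))  # drop duplicates, keep order
        if len(unique_nodes) < copies_per_site:
            raise ValueError(f"site {site!r} has fewer than {copies_per_site} nodes")
        placement.extend((site, node) for node in unique_nodes[:copies_per_site])
    return placement


def raw_capacity_mb(object_mb: int, copies: int) -> int:
    # Every copy is a full copy, so raw usage grows linearly with N.
    return object_mb * copies


sites = {"A": ["a1", "a2", "a3"], "B": ["b1", "b2"]}  # hypothetical node names
targets = place_copies(sites, copies_per_site=2)
print(targets)                             # [('A', 'a1'), ('A', 'a2'), ('B', 'b1'), ('B', 'b2')]
print(raw_capacity_mb(100, len(targets)))  # 400
```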

What’s Amplidata’s core technology:

The main difference between Amplidata's technology and that of other object storage providers is that Amplidata does not replicate objects. Instead, each object is cut into many small pieces (chunks), encoded with advanced math on top (erasure codes) and then spread across multiple disks and nodes. Why this method? Because the protection stays with the data itself rather than relying on extra parity parts. Don't get it yet? Let me show you by example:
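The core property of an erasure code is that *any* k of the n coded pieces are enough to rebuild the data. Below is a minimal sketch of that property using a textbook Reed-Solomon-style polynomial code over the prime field GF(257). This is a swapped-in, well-known technique for illustration, not Amplidata's own coding scheme (the post does not detail it); the function names are hypothetical.

```python
P = 257  # prime field modulus; every byte value 0..255 is a field element


def encode(data: list[int], n: int) -> dict[int, int]:
    """Evaluate the polynomial whose coefficients are the k data symbols at x = 1..n.

    Each (x, value) pair is one coded piece; none is a plain copy of the data.
    """
    if n >= P:
        raise ValueError("n must be smaller than the field size")
    return {
        x: sum(c * pow(x, i, P) for i, c in enumerate(data)) % P
        for x in range(1, n + 1)
    }


def decode(pieces: dict[int, int], k: int) -> list[int]:
    """Rebuild the k coefficients from ANY k surviving pieces (Lagrange interpolation)."""
    points = list(pieces.items())[:k]
    if len(points) < k:
        raise ValueError("not enough pieces left to reconstruct the data")
    coeffs = [0] * k
    for j, (xj, yj) in enumerate(points):
        basis = [1]  # coefficients of prod_{m != j} (x - x_m), lowest degree first
        denom = 1    # prod_{m != j} (x_j - x_m)
        for m, (xm, _) in enumerate(points):
            if m == j:
                continue
            shifted = [0] * (len(basis) + 1)
            for i, b in enumerate(basis):  # multiply basis by (x - x_m)
                shifted[i] = (shifted[i] - xm * b) % P
                shifted[i + 1] = (shifted[i + 1] + b) % P
            basis = shifted
            denom = denom * (xj - xm) % P
        scale = yj * pow(denom, P - 2, P) % P  # y_j / denom via Fermat's little theorem
        for i, b in enumerate(basis):
            coeffs[i] = (coeffs[i] + scale * b) % P
    return coeffs


original = b"ABCDEFGHIJKL"             # k = 12 data symbols
pieces = encode(list(original), n=16)  # 16 coded pieces, one per disk
for failed_disk in (2, 5, 11, 16):     # any 4 disks may fail
    del pieces[failed_disk]
print(bytes(decode(pieces, k=12)))     # b'ABCDEFGHIJKL'
```

Coded values in GF(257) can reach 256, so they no longer fit in one byte; production Reed-Solomon implementations work in GF(2^8) for that reason.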

In the first example you see an object (file+meta) “ABCDEFGHI” stored with a classic N-copies object strategy (3 copies in this case). If you look closely, you'll see that each copy of the entire file is written to a single disk on a single storage node. The copies are spread one per disk, either within the same node (for disk failover) or on other nodes (for disk+node failover).

In this second example I'll show you how Amplidata stores the object. The file is divided into CHUNKS of a fixed size. These chunks are then split again into a number of parts called SHARDS. Each shard is 1/4096th of the chunk plus ECC. In this specific case we have an object “ABCDEFGHIJKLMNOPQR”. The object is split into chunks of x MB, each of which is divided into 4096 shards that are then distributed over, for example, 9 disks. The orange part is the ECC overhead. I'll explain failover in the next part.
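The chunk-then-shard layout can be sketched with plain byte slicing. To keep it readable, this toy uses 6-byte chunks and 3 shards per chunk instead of x MB and 4096, and it leaves out the ECC step entirely; the function names are hypothetical and the round-robin spreading is an assumption, not Amplidata's placement algorithm.

```python
def split_chunks(data: bytes, chunk_size: int) -> list[bytes]:
    """Cut an object into fixed-size chunks (the last one may be shorter)."""
    return [data[i:i + chunk_size] for i in range(0, len(data), chunk_size)]


def split_shards(chunk: bytes, shards_per_chunk: int) -> list[bytes]:
    """Cut one chunk into equal shards (4096 per chunk in the real system)."""
    size = -(-len(chunk) // shards_per_chunk)  # ceiling division
    return [chunk[i * size:(i + 1) * size] for i in range(shards_per_chunk)]


def spread(shards: list[bytes], disks: int) -> dict[int, list[bytes]]:
    """Assign shards to disks round-robin (assumed placement, for illustration)."""
    layout: dict[int, list[bytes]] = {d: [] for d in range(disks)}
    for i, shard in enumerate(shards):
        layout[i % disks].append(shard)
    return layout


obj = b"ABCDEFGHIJKLMNOPQR"
chunks = split_chunks(obj, chunk_size=6)  # stands in for "x MB" chunks
shards = [s for c in chunks for s in split_shards(c, shards_per_chunk=3)]
layout = spread(shards, disks=9)
print(layout[0], layout[8])  # [b'AB'] [b'QR']
```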

Failover Basics/Mathematics:

Actually it is pretty simple. A protection policy has 3 basic parameters: the chunk size, the number of disks to distribute over and the number of failures to tolerate. The higher your failures/disks ratio, the bigger your ECC part gets.
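Why a higher failures/disks ratio means more ECC can be written down directly, assuming an ideal (MDS) erasure code in which any n - f of the n disks suffice to rebuild the data, with n the number of disks and f the number of tolerated failures. This is a lower bound; a real implementation may carry extra overhead on top of it.

```latex
\frac{\text{raw capacity}}{\text{usable capacity}}
  = \frac{n}{n - f}
  = \frac{1}{1 - f/n},
\qquad
n = 16,\ f = 4:\quad \frac{16}{12} \approx 1.33
```

So a 16/4 policy costs about 33% extra capacity while surviving any 4 disk failures, whereas 3 full copies cost 200% extra and survive only 2.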

Example: we have a file of 1228MB and a policy of 32MB chunks / 16 disks / 4 failures. This gives us 38 chunks of 32MB (and 1 of 12MB at the end), each of which is always divided into 4096 shards. The bigger the chunks, the bigger the shards: with 64MB chunks each shard would be 16KB, versus 8KB with 32MB chunks. Now it is time for some failover. We asked for 4 failures on a total of 16 disks, so we get 6720 blocks containing data + ECC coding. As you can see in the example on the right, we never store plain parts of the data, only calculated results after processing. Now comes the tricky part: because of that distributed calculation model, reading the data requires ANY 12 of the 16 disks (16 disks minus the 4 tolerated failures).
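The arithmetic in this example can be checked with a few lines of Python. `Policy` and `policy_math` are hypothetical helpers; the sketch reproduces the chunk count, tail chunk, shard size and read quorum, but deliberately not the 6720-block figure, which depends on Amplidata's own encoding.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    """Hypothetical protection policy: chunk size, disks, tolerated failures."""
    chunk_mb: int
    disks: int
    failures: int
    shards_per_chunk: int = 4096


def policy_math(file_mb: int, p: Policy) -> dict[str, float]:
    full_chunks, tail_mb = divmod(file_mb, p.chunk_mb)
    return {
        "full_chunks": full_chunks,
        "tail_chunk_mb": tail_mb,
        "shard_kb": p.chunk_mb * 1024 / p.shards_per_chunk,
        "disks_needed_to_read": p.disks - p.failures,
    }


print(policy_math(1228, Policy(chunk_mb=32, disks=16, failures=4)))
# {'full_chunks': 38, 'tail_chunk_mb': 12, 'shard_kb': 8.0, 'disks_needed_to_read': 12}
print(policy_math(1228, Policy(chunk_mb=64, disks=16, failures=4))["shard_kb"])  # 16.0
```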

Note: I use the terms chunks and shards, while in the examples you see superblocks, message blocks and check blocks. The first two are universal terms; the last three are Amplidata's own.

Benefits:

Besides a huge difference in overhead, the real storage people out there will already have spotted another big benefit: THROUGHPUT! You are writing to and reading from a lot of spindles on as many nodes as possible, not just 1 spindle on 1 node at a time.

This is what Howard came to do in Belgium. He ran some benchmarks against the whole thing and his results were amazing (in comparison to others). Read more about the review in the link at the bottom of this page.

Architecture:

Before I went to Amplidata HQ I knew that they were selling their products on their own hardware. At first I thought this was really wrong: if you are a startup, you should be focusing on the software. However … this is what they showed me onsite:

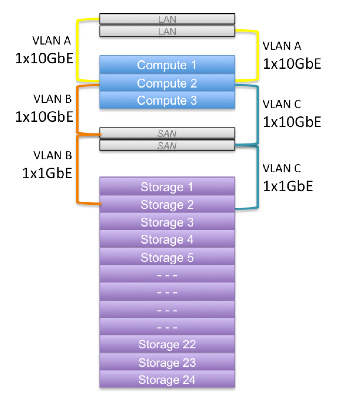

And then there is the physical scale-out architecture. The system has compute nodes and storage nodes. The one I saw, which Howard tested, was a 3 compute + 24 storage node configuration. There is no fixed ratio of compute to storage nodes and no theoretical limit. The compute nodes have 2x10GbE uplinks and 2x10GbE downlinks to the switches. The storage nodes each have 2x1GbE links to the switches. The compute and storage nodes communicate over a proprietary Amplidata IP protocol.
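A back-of-the-envelope sketch of the nominal link capacity in the tested 3 compute + 24 storage node configuration. It assumes the 16 disks / 4 failures policy from the earlier example and an ideal erasure code (each byte of user data becomes 16/12 bytes on the wire to the storage nodes), and it ignores protocol overhead, switches, CPU and disk speed. `LinkTier` and `max_user_write_gbit` are hypothetical names, and none of this is a measured result.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LinkTier:
    """A group of identical nodes and their network links (nominal speeds)."""
    nodes: int
    links_per_node: int
    gbit_per_link: float

    def aggregate_gbit(self) -> float:
        return self.nodes * self.links_per_node * self.gbit_per_link


def max_user_write_gbit(up: LinkTier, down: LinkTier, storage: LinkTier,
                        disks: int, failures: int) -> float:
    """Upper bound on client write rate from link capacity alone (ideal code assumed)."""
    to_storage = min(down.aggregate_gbit(), storage.aggregate_gbit())
    # Only (disks - failures) / disks of the encoded traffic is user data.
    return min(up.aggregate_gbit(), to_storage * (disks - failures) / disks)


compute_up = LinkTier(nodes=3, links_per_node=2, gbit_per_link=10.0)
compute_down = LinkTier(nodes=3, links_per_node=2, gbit_per_link=10.0)
storage = LinkTier(nodes=24, links_per_node=2, gbit_per_link=1.0)
print(storage.aggregate_gbit())                                        # 48.0
print(max_user_write_gbit(compute_up, compute_down, storage, 16, 4))  # 36.0
```

Under these assumptions the 24 storage nodes' 1GbE links, not the compute nodes' 10GbE links, set the ceiling.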

The compute nodes handle breaking objects down into chunks/shards and distributing them to the storage nodes. The storage nodes, however, are not JBODs! They handle some tasks on their own, like background scrubbing. Scrubbing is a technique where you read all the physical data, check whether it is still good and, if it is not, rewrite it to other blocks.
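Background scrubbing can be sketched as: record a checksum at write time, periodically recompute it, and rewrite any block that no longer matches. The SHA-256 checksum, the `Block` type and the `good_copy` callback (standing in for rebuilding the block from other shards) are all assumptions for illustration, not how Amplidata's storage nodes implement it.

```python
import hashlib
from collections.abc import Callable
from dataclasses import dataclass


@dataclass
class Block:
    data: bytes
    checksum: str  # SHA-256 recorded when the block was written


def write_block(data: bytes) -> Block:
    return Block(data, hashlib.sha256(data).hexdigest())


def scrub(blocks: dict[int, Block], good_copy: Callable[[int], bytes]) -> list[int]:
    """Re-verify every block; rewrite any whose contents no longer match the checksum."""
    repaired = []
    for block_id, block in list(blocks.items()):
        if hashlib.sha256(block.data).hexdigest() != block.checksum:
            # Hypothetical repair path: fetch/rebuild good data, write it as a fresh block.
            blocks[block_id] = write_block(good_copy(block_id))
            repaired.append(block_id)
    return repaired


original = {0: b"shard-0", 1: b"shard-1", 2: b"shard-2"}
blocks = {i: write_block(d) for i, d in original.items()}
blocks[1].data = b"shard-X"  # simulate silent corruption (bit rot)
print(scrub(blocks, good_copy=original.__getitem__))  # [1]
print(blocks[1].data)                                 # b'shard-1'
```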

My Take-Aways:

As usual I share some of my personal insights here.

- We already know that when you have A LOT of data, block storage is not going to be of any help. Even file system storage has its limitations. Object storage is not new, but it is now really gaining traction. Imagine those billions of Facebook pictures, YouTube videos, very high-resolution MRI scans, … From what I have seen up until today, there is no “best solution” built yet. And if one has been built, it may well be a purpose-built solution that is not suited for broader use.

- I like this one. They lag a few years behind some others in the industry, but they played it smarter from a technology standpoint. I just hope it's enough to be disruptive. Don't get me wrong here; this is not R&D only. This is already Generation 2 and runs in production in pretty big environments.

- Amplidata has a multi-rack, multi-site approach that I haven't covered yet (the blog was already long enough). It has far less overhead than the competition, but there are still some downsides I want them to tackle first. One of those is that even if site A holds enough shards (data+ECC) to recover the object, a read still retrieves all available shards from the other sites as well. This would not affect performance too much, but it will eat up your bandwidth unnecessarily.

- Amplidata should be in the next Storage TechFieldDay! The delegates would love it!
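The multi-site read issue raised above suggests a local-first read plan: take shards from the local site first and go to remote sites only for the shortfall. This is a sketch of the behaviour the author would like to see, not how Amplidata reads today; `plan_read`, the site names and the shard counts are hypothetical.

```python
def plan_read(shards_by_site: dict[str, list[int]], local_site: str, k: int) -> dict[str, list[int]]:
    """Pick k shards to read, preferring the local site; fetch remote shards only if needed."""
    plan: dict[str, list[int]] = {}
    local = shards_by_site.get(local_site, [])[:k]
    if local:
        plan[local_site] = local
    needed = k - len(local)
    for site, shards in shards_by_site.items():
        if needed == 0:
            break
        if site == local_site:
            continue
        take = shards[:needed]
        if take:
            plan[site] = take
            needed -= len(take)
    if needed:
        raise ValueError("not enough shards available to reconstruct the object")
    return plan


# Hypothetical layouts: shard ids stored per site, any 12 shards rebuild the object.
plan_local = plan_read({"A": list(range(0, 14)), "B": list(range(14, 20))}, "A", k=12)
print({site: len(ids) for site, ids in plan_local.items()})  # {'A': 12}
plan_mixed = plan_read({"A": list(range(0, 10)), "B": list(range(10, 20))}, "A", k=12)
print({site: len(ids) for site, ids in plan_mixed.items()})  # {'A': 10, 'B': 2}
```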

Press release announcing the new CEO and $6M in new venture capital.

Press release announcing the review by Howard Marks (to follow when available)