Infinio is one of those small startups you just cannot love enough. Much of that is down to their great choice of people, from the CEO down to the local sales engineers, including one of my personal best friends in this industry, Matthew Brender (@mjbrender).

Infinio 2.0

Infinio launched last year with a storage accelerator product. The elevator pitch: you deploy one small appliance per VMware host, each using 8GB of that host’s RAM, and together they form a distributed caching pool that offloads NFS read requests from your storage.

Today Infinio is announcing Infinio Accelerator v2.0. You can follow a deep dive into the updates on Wednesday in their TechFieldDay presentation. I had a brief chat with Matthew yesterday and thought I’d share some more information to go with the press release.

SAN & Unified Storage Support

For an early-stage startup, choosing NFS as the first protocol to support was OK. As of v2.0 we are going to see support for practically every protocol available. We are talking:

- NFS

- iSCSI

- FC

- FCoE

- FCoTR*

The only one I can think of that is still missing is local storage: say, a hardware RAID 5 of six 10k disks in the server that shows up as one big local volume.

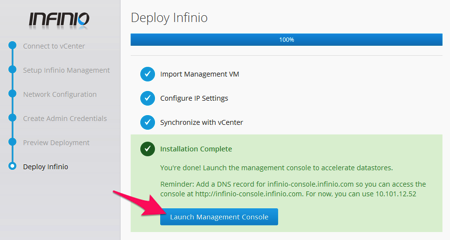

In practice you deploy the Infinio controller manually from an OVA, or you use the Windows executable that does it for you. Once that is done, you go to the Management Console, where you are asked which volumes to optimize. Previously, to avoid confusion, only NFS volumes showed up there. Now you’ll see practically all your volumes.

Application-level Reporting

Looking at how their first customers use the product, Infinio noticed that users spent more time in the management console than strictly necessary, because it also showed what was actually going on in the environment. As a storage admin, for example, I’m not only interested in the peak of a problem; I would love to see what happened before it, or whether a noisy neighbour is messing with everything.

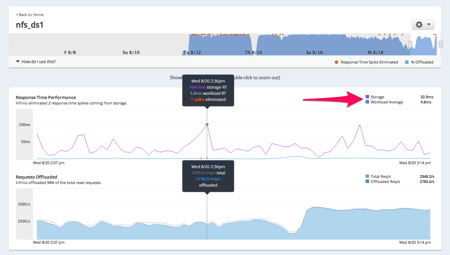

With all that information at hand, Infinio is now adding dynamic monitoring and reporting views to the management console. Here’s a basic example: the monitoring of a single datastore. At the arrow, you’ll notice that although the average latency to the backend storage is unacceptably high (over 30ms, where things start to break at 20ms), the average latency seen by the VMs is under 5ms. Even more striking, at the 150ms spike in backend latency, the VM latency stayed down at 5.8ms.

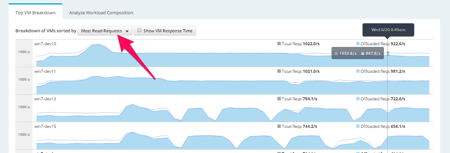

With all that information together, and knowing which VM owns which workload (remember, classic LUN-based storage only sees SCSI requests), you can start getting deeper insights into the total package. Take the Top VM Breakdown views, for example. You can scroll all the way down, though you are probably only interested in the top. As I have highlighted, there is also a dropdown list of options to order the VMs by, for example “least cacheable workload”.

One thing you can’t see in this beta version is the search bar that will sit at the top right, where you can search by VM name or by keyword. That way you could get a report of Most Read Requests across all the SQL servers. Having that list ordered top-down is exactly what you need when the DB admin comes asking what’s wrong with the performance and you can tell him/her that it’s probably their query 😉
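To picture what such a search-and-sort report boils down to, here’s a minimal Python sketch. To be clear, this is not Infinio’s API: the `VmStats` fields, the `top_vms` helper and the sample numbers are all made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class VmStats:
    # Hypothetical per-VM counters; field names are assumptions, not Infinio's.
    name: str
    read_requests: int
    cache_hit_ratio: float  # 0.0 .. 1.0


def top_vms(stats, keyword="", key="read_requests", descending=True, limit=10):
    """Keep VMs whose name contains the keyword, then sort by the chosen metric."""
    matches = [vm for vm in stats if keyword.lower() in vm.name.lower()]
    ordered = sorted(matches, key=lambda vm: getattr(vm, key), reverse=descending)
    return ordered[:limit]


# Made-up sample data, purely for illustration.
inventory = [
    VmStats("sql-prod-01", 120_000, 0.42),
    VmStats("web-01", 15_000, 0.91),
    VmStats("sql-dev-02", 48_000, 0.77),
    VmStats("file-01", 60_000, 0.65),
]

# "Most Read Requests" across everything matching the keyword "sql".
for vm in top_vms(inventory, keyword="sql"):
    print(f"{vm.name:12} {vm.read_requests:>8} reads  {vm.cache_hit_ratio:.0%} hit")

# "Least cacheable workload" first: sort ascending on hit ratio.
least_cacheable = top_vms(inventory, key="cache_hit_ratio", descending=False)
```

The same helper covers both the keyword search and the ordering dropdown; only the sort key and direction change.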

NOTE TO PRODUCT MANAGEMENT: this is reporting for the storage/vSphere admin that has access to the infrastructure only. I cannot report the results to my management/other teams if I can’t export a report to XML, CSV, e-mail, PDF, … Please add to 2.1 😉

Cache Advisor for Smart Sizing of Memory

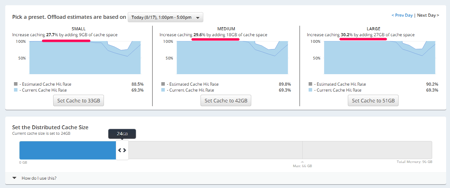

Remember where I said that Infinio deploys one accelerator of 8GB per host? That was just a safe starting point for v1.0. What Infinio is now seeing at customers is that those 8GB in reality get a 5:1 to 10:1 deduplication ratio, which works out to a maximum of 80GB of working set per host.
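The arithmetic behind that figure is simple enough to sketch in a few lines of Python. The function name is mine, and it assumes the effective working set is simply cache size times dedup ratio:

```python
def effective_working_set_gb(cache_gb, dedup_ratio):
    """Assumed model: effective working set = raw cache size x dedup ratio."""
    return cache_gb * dedup_ratio


# 8GB per accelerator at the 5:1 and 10:1 ratios mentioned above.
for ratio in (5, 10):
    gb = effective_working_set_gb(8, ratio)
    print(f"{ratio}:1 dedup on 8GB -> {gb}GB of working set per host")
```

So the 80GB is the best case at 10:1; at 5:1 the same 8GB covers about 40GB.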

In v2.0 the cache sizing advisor gives you three options for scaling up that amount of RAM and tells you how much benefit each would bring, based on a time slot you choose. Depending on your data-protection solution, you probably wouldn’t want that period to be the backup window 😉

In this 3-node cluster we start with 24GB of RAM (3x8GB). The advisor tells me that my current cache hit ratio is 69.3%, but that I could get it up to 88%, 89% or even 90% by adding 3, 6 or 9GB of RAM per accelerator respectively. You can also change it on a per-accelerator basis, by the way. And this information is not an artificial guess: it is calculated from polling throughout the timeline.
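To see how quickly the returns diminish, here’s a small Python sketch that turns those advisor numbers into gain per extra GB across the cluster. The hit ratios are the ones quoted above; the `advisor_table` helper and the “points per GB” metric are my own, not something the advisor reports.

```python
BASELINE_HIT_PCT = 69.3
# Extra GB per accelerator -> predicted hit ratio (%), as quoted by the advisor.
OPTIONS = {3: 88.0, 6: 89.0, 9: 90.0}
HOSTS = 3


def advisor_table(baseline_pct, options, hosts):
    """Return rows of (extra GB/host, extra GB total, gain in points, points per GB)."""
    rows = []
    for extra_gb, hit_pct in sorted(options.items()):
        total_extra = extra_gb * hosts
        gain = hit_pct - baseline_pct
        rows.append((extra_gb, total_extra, round(gain, 1), round(gain / total_extra, 2)))
    return rows


rows = advisor_table(BASELINE_HIT_PCT, OPTIONS, HOSTS)
for extra_gb, total_extra, gain, per_gb in rows:
    print(f"+{extra_gb}GB/host (+{total_extra}GB total): +{gain} points, {per_gb} points per GB")
```

Nearly all of the benefit comes from the first 3GB per accelerator; doubling or tripling that only buys one or two more percentage points.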

A good thing to know is what actually happens when you hit OK! The accelerator VM will NOT be upgraded with more RAM. Instead, the accelerator is deleted and replaced with a bigger one, non-disruptively to the running VMs. There is one side note though: during that delete + deploy window (90 seconds on average) you rely on the power of the backend storage. That is actually fine, as it happens as a rolling upgrade (one host at a time).

That one-host-at-a-time approach brings us to the second small gotcha: when you delete the first accelerator, you remove that host’s deduped 8GB from the cache pool. As Infinio is a read cache only, that’s not really an issue: all your data is still on the backend storage anyhow. It does mean you have to re-heat the data that was on that host, though. This only applies to the amount of cache on the first host! Somehow I think I would rather that host not be the one running my SQL 🙂
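As a rough way to picture the rolling replacement, here’s a naive Python sketch. It only tracks raw RAM in the pool while each accelerator is swapped out, not how warm the cache contents are or how the pool redistributes data. The host names, the `rolling_replace` helper and the 8GB to 11GB example are assumptions of mine; the 90 seconds is the average mentioned above.

```python
def rolling_replace(hosts, old_gb, new_gb, seconds_per_host=90):
    """Simulate a one-host-at-a-time delete + deploy of accelerators.

    Returns a list of (host, pool GB during the swap, pool GB after the swap)
    plus the total elapsed time in seconds.
    """
    sizes = {host: old_gb for host in hosts}
    steps = []
    for host in hosts:
        sizes[host] = 0          # accelerator deleted: this host reads from the backend
        during = sum(sizes.values())
        sizes[host] = new_gb     # bigger accelerator deployed, starting cold
        after = sum(sizes.values())
        steps.append((host, during, after))
    return steps, len(hosts) * seconds_per_host


# Hypothetical 3-node cluster going from 8GB to 11GB per accelerator.
steps, total_seconds = rolling_replace(["esx-a", "esx-b", "esx-c"], old_gb=8, new_gb=11)
for host, during, after in steps:
    print(f"{host}: pool {during}GB during swap, {after}GB after")
print(f"Whole cluster done in about {total_seconds} seconds")
```

In this simple model only one host at a time is without an accelerator, which is why the backend only ever has to absorb the reads of a single host.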

THOUGHT: I’d love to hear from an R&D or Product Management perspective why upgrading the existing accelerator appliance with more RAM is not possible (or not a good choice). It would make a few things smoother.

Last year at VMworld I interviewed Arun Agarwal, CEO of Infinio. Feel free to watch here. And personally I am looking forward to what they have to show at TechFieldDay Extra on Wednesday.

Disclaimer: I am at VMworld courtesy of VMware (free blogger’s pass) and the TechFieldDay sponsors (travel & accommodation). Infinio is a sponsor of TechFieldDay and of my blog.


Oh, you.

😉
