This post is part of a series covering the 10 vendors that presented their technology to the TechFieldDay attendees gathered for Storage Field Day 4. You can find all background about the project here: http://techfieldday.com/event/sfd4/ – The posts in this series were written as live notes, published at the end of each presentation and reviewed afterwards. Disclaimer: the attendees are not personally compensated beyond transport and lodging, nor are we obliged to write anything at all.

Company

- Founded: 2009 (IRL)

- Founders: Kelly Murphy (CTO), Antony Sawicki (architect), Tomasz Nowak (Engineer)

- Financial Status: A-round ($12mln), B-round to be expected soon

- CEO: George Symons

Technology

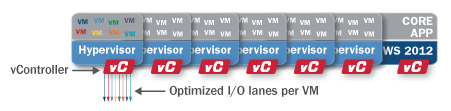

GridStore is a scale-out SCSI solution for Microsoft environments. A Virtual Controller (vC) is installed in each hypervisor or physical server. The vC is a driver, not an agent. If you wanted to, you could install the vC in a VMware VM and present that storage through iSCSI to vSphere, but that’s not really the use case they are after.

The physical appliances are single-processor boxes (DELL R320/R210). These appliances are combined into a “group” using either the Capacity model or the Hybrid model. The performance nodes contain PCIe flash cards (550GB Virident) for read and write(back) caching. As there are at least 3 nodes in a cluster, ‘dirty’ data in the write cache is still protected when something goes wrong. The physical backend is regular Ethernet.

The GridStore controller (GridControl) is a single-instance management center for configuring the physical machines. Once all the nodes are configured you can start creating vLUNs. vLUNs can be presented to a single host/server or to a whole hypervisor cluster. Currently the vLUNs are all thick provisioned. For data protection there is Reed-Solomon encoding, which goes beyond RAID5/RAID6. The RAID stripes can be spread over all nodes, so an 8+1 RAID5 stripe over 9 nodes could even survive a full node failure. The protocol used between the vC driver in the host and the physical nodes is Sockets Direct Protocol (SDP). The driver is responsible for writing to the nodes in parallel, and there is a level of QoS involved that needs some more investigation from me.
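To make the 8+1 example a bit more concrete, here is a minimal sketch of why a stripe spread over 9 nodes survives the loss of one of them. It uses plain XOR parity (the RAID5-style single-parity case), not GridStore's actual Reed-Solomon implementation, and the function names `make_stripe` and `rebuild` are made up for illustration only.

```python
from functools import reduce


def xor_blocks(blocks):
    """XOR a list of equal-length byte blocks together, byte by byte."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


def make_stripe(data_blocks):
    """Return the data blocks plus one parity block (8 data + 1 parity = 9 nodes)."""
    return list(data_blocks) + [xor_blocks(data_blocks)]


def rebuild(stripe, lost_index):
    """Recompute the block of a failed node from the surviving blocks."""
    survivors = [block for i, block in enumerate(stripe) if i != lost_index]
    return xor_blocks(survivors)


# Hypothetical data: 8 small blocks, one per data node.
data = [bytes([i]) * 4 for i in range(1, 9)]
stripe = make_stripe(data)

# Simulate the failure of node 3 and rebuild its block from the other 8.
recovered = rebuild(stripe, 3)
print(recovered == stripe[3])
```

Because each node holds exactly one block of the stripe, losing a whole node costs only one block per stripe, which single parity can rebuild. Surviving more than one simultaneous node failure needs more parity blocks, which is where Reed-Solomon style encoding comes in.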

My Take

I truly believe that the technology has a chance. However … although the appliances are small, they do have a lot of compute power, and the footprint ratio for capacity or compute seems pretty big today. If they keep the same model of selling these two appliances, I don’t really see a big future. There are a few options in my opinion:

- Immediately get away from selling appliances themselves. If they want to keep the controlled HCL type of selling, they should OEM with as many partners as possible. Those could be blueprint-architecture white-box resellers or even the HP/DELL/IBM server departments.

- Both the driver and the controller are Windows software. Instead of adding all that compute power to dedicated appliances, I’d rather see that compute power being added to the hypervisor with local storage. When you do that, you see that this is a Windows SOFS (Scale-Out File Server), but very well done. The only problem I see here is that they would encounter Nutanix on their path.

- Lastly: sell the whole deal as software only. I know it is a lot harder for a startup to sell software than appliances, but I still believe in the “build-your-own-datacenter” architectures. I know there are a lot of datacenter architects who don’t like the limitations of pre-configured appliances that are only sold through a limited OEM channel.

Links

- website – http://www.gridstore.com

- twitter – @gridstore

Video

Here are the 3 parts of the Storage Field Day 4 presentation: Gridstore Background / Software Defined Storage / Demo