This post is part of a series on the 10 vendors that presented their technology to the TechFieldDay attendees gathered for this edition, Storage Field Day 4. You can find all the background on the project here: http://techfieldday.com/event/sfd4/. The posts in this series are live notes, published at the end of each presentation and reviewed afterwards. Disclaimer: the attendees are not personally compensated beyond transport and lodging, nor are we obliged to write anything at all.

Company

- Founded: 2010

- Founders: Felix Xavier (CTO) & Umasankar Mukkura (VP Engineering)

- Financial Status: B-round

- CEO: Greg Goelz

Technology

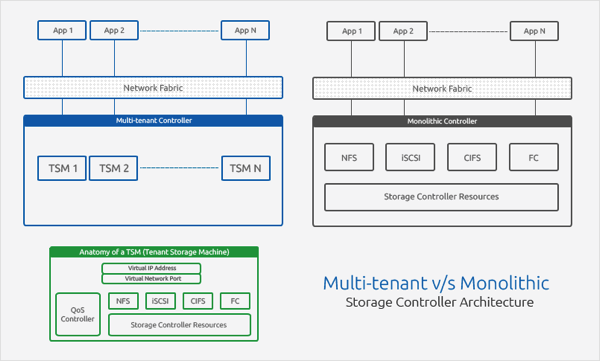

ElastiStor consists of storage nodes that handle the workload coordination. On top of that sits the ElastiCenter, which basically provides the plug-ins for stack and virtualization management. The modules in the ElastiCenter include Alerting & Monitoring, the RealTime Management Engine, Statistics Capture & Aggregation, and Network Communications. Everything can be controlled through a REST API. The Tenant Storage Machine (TSM) is the component that actually handles the QoS.

The front-end connectivity is NFS, CIFS (SMB) and iSCSI by default; Fibre Channel is an add-on module. The back end is based on ZFS (on FreeBSD), so it can use basically any locally attached storage. There are 2, 3 or 4 nodes per HA cluster, but you can add multiple clusters to a single management group. The clusters are active/active. Every pool of storage is “owned” by one of the nodes/controllers. I wrote a ZFS/RAID-Z basics post last year after a visit to Nexenta, who actually use the same technology. Being ZFS based, it also supports the built-in ZFS deduplication and compression. It has been brought to my attention that I need to write another ZFS basics post on that 😉

The magic from CloudByteInc goes into the QoS (Quality of Service). You can limit the number of IOPS per tenant. When a new pool is installed, default levels of available IOPS are queried from the hardware, but these can be altered manually. The limits come in two kinds: hard limits on the maximum available IOPS, or soft limits with grace space for shared capacity. All limits are set in IOPS, not in percentages. For NFS, the limits can be set at the VM level; for all other workloads they are set at the volume level. When a workload moves from one pool to another, its history is taken into account, so you don’t have to start measuring from scratch. This only applies to migrations done by ElastiStor, not to those done by the virtualization admin.
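To make the difference between the two kinds of limits concrete, here is a minimal Python sketch of how I understood it. This is not CloudByte's implementation: the names `TenantLimit`, `grace_iops`, `pool_spare` and `allowed_iops` are made up for illustration, and treating "grace space for shared capacity" as a burst allowance drawn from spare pool IOPS is my own assumption.

```python
from dataclasses import dataclass


@dataclass
class TenantLimit:
    """Hypothetical per-tenant (or per-volume) QoS setting, expressed in IOPS."""
    iops_limit: int       # provisioned IOPS for this tenant
    hard: bool = True     # hard limit: never go above iops_limit
    grace_iops: int = 0   # soft limit only: extra IOPS allowed on top of the cap


def allowed_iops(limit: TenantLimit, requested: int, pool_spare: int) -> int:
    """Return how many IOPS the tenant may consume in one interval.

    pool_spare is the (assumed) number of IOPS the pool has left over
    after all other tenants have been served.
    """
    if requested <= limit.iops_limit:
        return requested
    if limit.hard:
        # Hard limit: clip at the cap, even if the pool is idle.
        return limit.iops_limit
    # Soft limit: burst above the cap, bounded by the grace space
    # and by what the pool actually has spare.
    burst = min(requested - limit.iops_limit, limit.grace_iops, max(pool_spare, 0))
    return limit.iops_limit + burst


if __name__ == "__main__":
    hard = TenantLimit(iops_limit=1000)
    soft = TenantLimit(iops_limit=1000, hard=False, grace_iops=300)
    print(allowed_iops(hard, requested=1500, pool_spare=800))
    print(allowed_iops(soft, requested=1500, pool_spare=800))
    print(allowed_iops(soft, requested=1500, pool_spare=100))
```

With a hard limit the tenant is clipped at its cap no matter how idle the pool is. With a soft limit it can borrow from spare capacity, but only up to its grace space. Note that everything is in absolute IOPS, not percentages, matching how the limits are configured.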

Currently supported platforms: VMware vSphere / XenServer / OpenStack / CloudStack. There is also an SRM plugin.

My Take

This definitely is a very young product. I have a hard time believing this is disruptive enough to actually make it in the long run. Basically it doesn’t do anything more than put an IOPS cap on volumes. This is something the dev team at Nexenta could add to their products with 2 weeks of R&D, if it’s not in there already. I am missing a lot of features that would make this a real QoS system. The biggest example is that there are only those limits and no priorities. Other things that would be nice: total aggregate IOPS instead of IOPS per volume, more granular control per VM or workload instead of per volume, and dynamic monitoring of the actual IOPS behaviour. So for now, NO. Let’s see what happens in the near future.

Links

- website – http://www.cloudbyte.com/

- twitter – @CloudByteInc

- trial download: http://www.cloudbyte.com/downloads/

Video

Here are the 4 parts of the Storage Field Day 4 presentation: Introduction / Technology Overview / Demonstration / ChalkTalk